Weekly Report 2021, August 9 - 15

Can you believe it? I’ve been doing this for a full month now! More deep dives this week, mostly around making reference leak tests run on macOS, which I need in order to check PRs locally before landing them. This will take at least another week to get right. I also made progress on gathering data from all GitHub PRs, which will allow us to answer some interesting questions about our behavior as a team.

Issue stats for the week look as follows: 16 closed, 1 opened. PRs: 1 authored, 50 closed, 3 reviewed. Some of you asked what the stats mean exactly, so here are a few clarifications:

- for PRs, “closed” might mean either merged or rejected, but the vast majority are merged;

- for issues, “closed” might mean either fixed or “wontfix”, but the vast majority are fixes;

- fixing an issue in this context most often doesn’t mean I fixed it myself but rather that it got fixed through PRs I merged and after all due process there was nothing else to do on the issue so I closed it;

- some PRs by other contributors need changes to merge and often I do them myself to streamline things – I don’t count those as “authored” by myself;

- if I author a PR one day and close it the same day, it will only show up as “authored” in the stats;

- if I author a PR one day, it takes a few days to review (usually with some back and forth discussion and changes) and it’s closed after that, it will show up both as “authored” and “closed” as that was the reality of the situation;

- if I review the same PR a few times in the same week, it’s only counted once.
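To make the counting rules above concrete, here is a minimal sketch of how such weekly PR stats could be tallied. The event format (a day, an action, and a PR number) and the function name are assumptions made for illustration; this is not the script that produced the numbers in this report.

```python
from datetime import date


def weekly_pr_stats(events):
    """Tally weekly PR stats from (day, action, pr_number) tuples.

    The tuple layout is hypothetical; `action` is one of
    "authored", "closed", or "reviewed".
    """
    events = list(events)  # we iterate more than once

    # Remember on which day each PR was authored.
    authored_on = {pr: day for day, action, pr in events if action == "authored"}

    # A PR authored and closed on the same day only counts as "authored".
    closed = {
        pr
        for day, action, pr in events
        if action == "closed" and authored_on.get(pr) != day
    }

    # Reviewing the same PR several times in a week counts once.
    reviewed = {pr for _, action, pr in events if action == "reviewed"}

    return {
        "authored": len(authored_on),
        "closed": len(closed),
        "reviewed": len(reviewed),
    }


# Made-up PR numbers, purely for illustration.
example_events = [
    (date(2021, 8, 9), "authored", 1),
    (date(2021, 8, 9), "closed", 1),    # same day: only "authored"
    (date(2021, 8, 10), "authored", 2),
    (date(2021, 8, 12), "closed", 2),   # later day: both
    (date(2021, 8, 10), "reviewed", 3),
    (date(2021, 8, 13), "reviewed", 3), # second review: not counted again
    (date(2021, 8, 11), "closed", 4),   # someone else's PR
]
stats = weekly_pr_stats(example_events)
```

Using sets keeps the "count once" rule implicit, which is why repeated reviews never need special handling.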

Highlights

What are all those people doing?

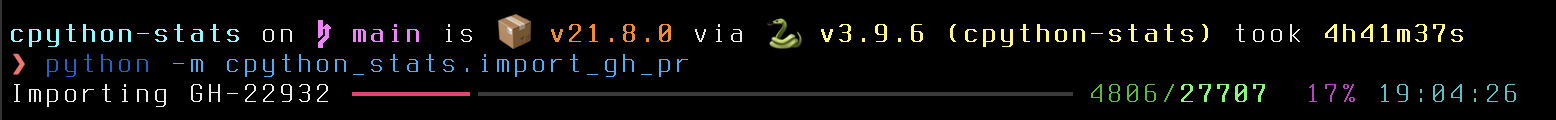

This week I wrote an importer for data from all Github PRs on python/cpython. This is part of a larger project around discovering which parts of CPython need the most help. I don’t have any conclusions yet, we’re currently at the data gathering stage. Downloading all relevant information from Github with their rate limits took over 40 hours, here it is mid-run:

While this was running, I worked on an equivalent importer from our Git history. That’s not finished yet. The interesting part will be combining both datasets which will allow answering the following questions, among others:

- which parts of the project get the most changes;

- which parts of the project get the most review;

- which parts of the project are the slowest to merge a proposed change;

- related to the above: which parts of the project are least likely to receive any core developer attention.

Of course, having this locally will also allow us to get answers to new questions as we ask them. But the ones above are of great interest to the Steering Council, so we’ll be focusing on those first.
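As an illustration of the kind of question listed above, the following sketch computes the median time to merge per top-level directory. The record layout (`files`, `created_at`, `merged_at`) is a hypothetical stand-in, since the combined dataset doesn't exist yet, and grouping by top-level directory is just one possible definition of a "part of the project".

```python
from collections import defaultdict
from datetime import datetime
from statistics import median


def median_days_to_merge(prs):
    """Return {top-level directory: median days from creation to merge}.

    `prs` is an iterable of dicts with hypothetical keys
    "files", "created_at" and "merged_at" (None if never merged).
    """
    durations = defaultdict(list)
    for pr in prs:
        if pr["merged_at"] is None:
            continue  # unmerged PRs don't have a merge time
        days = (pr["merged_at"] - pr["created_at"]).total_seconds() / 86400
        # Count each PR once per area it touches, e.g. "Lib" or "Doc".
        areas = {path.split("/", 1)[0] for path in pr["files"]}
        for area in areas:
            durations[area].append(days)
    return {area: median(values) for area, values in durations.items()}


# Made-up records, purely for illustration.
sample_prs = [
    {
        "files": ["Lib/pty.py", "Doc/library/pty.rst"],
        "created_at": datetime(2021, 8, 1),
        "merged_at": datetime(2021, 8, 3),
    },
    {
        "files": ["Lib/shelve.py"],
        "created_at": datetime(2021, 8, 1),
        "merged_at": datetime(2021, 8, 7),
    },
    {
        "files": ["Modules/readline.c"],
        "created_at": datetime(2021, 8, 1),
        "merged_at": None,
    },
]
merge_times = median_days_to_merge(sample_prs)
```

A median is used rather than a mean so that a handful of years-old PRs doesn't dominate the result for an otherwise healthy area.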

By the way, the GitHub PR importer is a rather primitive script using a bunch of dataclasses stored using shelve. The file takes up a little over 104 MB on disk. I wrote custom dataclasses instead of using the raw JSON responses from the GitHub API because I wanted to make sure I’m storing everything that I want, not just things that happen to be returned. That worked well, although I will need a few tweaks to enable efficient updating of existing data without essentially redoing the import from scratch every time.

I’m aware that the dataclasses I wrote don’t lend themselves too well to OLAP. The point was to get the data in a rich format locally first and then to figure out how to best denormalize them for analysis. That’s also why I opted for using shelve which, while very limited, is just the simplest API to persist many objects for “a while”. Yes, I know the pickle-related caveats, but this isn’t meant to be long-term storage.
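For readers unfamiliar with the combination, here is a minimal sketch of persisting dataclass records with shelve. The `PullRequest` fields and helper names are invented for the example and are not the importer's actual schema. The sketch also stores `asdict()` output rather than the instances themselves, which is an assumption on my part; the real importer may well pickle the dataclasses directly.

```python
import shelve
import tempfile
from dataclasses import asdict, dataclass, field
from datetime import datetime
from pathlib import Path
from typing import List, Optional


@dataclass
class PullRequest:
    # Hypothetical fields; the real importer stores much more.
    number: int
    title: str
    author: str
    created_at: datetime
    merged_at: Optional[datetime] = None
    files: List[str] = field(default_factory=list)


def save_prs(db_path, prs):
    """Store each PR under its number; shelve keys must be strings."""
    with shelve.open(str(db_path)) as db:
        for pr in prs:
            db[str(pr.number)] = asdict(pr)


def load_pr(db_path, number):
    """Rebuild a PullRequest from the stored dict."""
    with shelve.open(str(db_path)) as db:
        return PullRequest(**db[str(number)])


# Round-trip one made-up record through a temporary shelf.
with tempfile.TemporaryDirectory() as tmp:
    shelf_path = Path(tmp) / "prs"
    original = PullRequest(
        number=1,
        title="Example change",
        author="someone",
        created_at=datetime(2021, 8, 9),
        files=["Lib/example.py"],
    )
    save_prs(shelf_path, [original])
    restored = load_pr(shelf_path, 1)
```

Keying by PR number also hints at how incremental updates could work: re-fetching a single PR only overwrites its own entry.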

While looking for a better end format, I discovered Simon Willison’s sqlite_utils, which greatly simplifies data import to SQLite. This will come in handy as I’d like to publish the entire dataset in the form of a .sqlite file that we can then slice and dice with Datasette. That will be the long-term storage for this.
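To show roughly what flattening dataclasses into a SQLite table involves, here is a sketch using only the standard library's `sqlite3` module. It deliberately does not use sqlite_utils, which would presumably remove most of the boilerplate; the table name, columns, and `PullRequestRow` class are all made up for the example.

```python
import sqlite3
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class PullRequestRow:
    # A flat, hypothetical projection of a richer PR record.
    number: int
    author: str
    created_at: str            # ISO 8601 text, which sorts correctly in SQLite
    merged_at: Optional[str]


def export_rows(conn, rows):
    """Create the table if needed and upsert one row per PR."""
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS pull_requests (
            number INTEGER PRIMARY KEY,
            author TEXT,
            created_at TEXT,
            merged_at TEXT
        )
        """
    )
    conn.executemany(
        "INSERT OR REPLACE INTO pull_requests "
        "VALUES (:number, :author, :created_at, :merged_at)",
        [asdict(row) for row in rows],
    )
    conn.commit()


# In-memory database with made-up rows, purely for illustration.
conn = sqlite3.connect(":memory:")
export_rows(
    conn,
    [
        PullRequestRow(1, "someone", "2021-08-09T10:00:00", "2021-08-10T12:00:00"),
        PullRequestRow(2, "someone-else", "2021-08-11T09:30:00", None),
    ],
)
merged_count = conn.execute(
    "SELECT COUNT(*) FROM pull_requests WHERE merged_at IS NOT NULL"
).fetchone()[0]
```

`INSERT OR REPLACE` keyed on the PR number means the export can be re-run after an incremental import without duplicating rows.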

It hangs when there’s nothing else to say

I dealt with two interesting issues this week that end up freezing the running program.

For context, I want to be able to run the full Python test suite on macOS with the refleak finding machinery. There are a few tests that fail here, and this week we managed to get rid of one of the failures. There was a 30-month-old PR that I finished and merged which solves the following problem on macOS:

    >>> import pty, sys
    >>> pty.spawn([sys.executable, '-c', 'print("Hello, world!")'])
    Hello, world!
    [never returns]

That PR was itself an updated version of the original suggested fix that is now more than 4 years old. I hope the residency will reduce the number of such cases.

I discovered there are quite a few more issues with PTY on macOS. I’ll be looking into fixing some of those later as well.

It hangs when you run it twice

This one is related to the refleak runs on macOS as well. It turns out that the following hangs. Try it yourself if you happen to run on Apple hardware:

❯ python3.9 -m test test_builtin test_builtin -v

The hang goes back to at least 3.6; I haven’t checked 3.5 and older. It took me a long while to investigate, and it looks like the issue is somehow related to readline. I haven’t found the root cause yet since I was afraid that without timeboxing I could easily have spent the rest of the week chasing this rabbit.

And what a fun rabbit that was! A Heisenbug that never showed up for us in CI because the test was skipped if stdin wasn’t a TTY. And it wasn’t a TTY because we run the tests in parallel using the -j option which uses multiprocessing. This is especially important for refleak tests that can easily run for multiple hours if executed sequentially with no parallelism.
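The skip mechanism is easy to reproduce in miniature. The sketch below shows a test that skips itself when stdin isn't a TTY, plus a helper that checks what a child process sees when its stdin is not a terminal. The class and function names are invented, and redirecting stdin from the null device is only a stand-in for illustration; it is not a description of how the test runner sets up its worker processes.

```python
import subprocess
import sys
import unittest


class TtyOnlyTests(unittest.TestCase):
    """Hypothetical test that only makes sense on a real terminal."""

    def test_needs_terminal(self):
        if not sys.stdin.isatty():
            # Reported as skipped rather than failed, so CI stays green
            # and nobody notices the test never actually ran.
            self.skipTest("stdin must be a TTY")
        self.assertTrue(sys.stdin.isatty())


def child_stdin_is_tty():
    """Ask a child process whether its stdin is a TTY.

    stdin is redirected from the null device, so the child never
    sees a terminal even when the parent was started from one.
    """
    result = subprocess.run(
        [sys.executable, "-c", "import sys; print(sys.stdin.isatty())"],
        stdin=subprocess.DEVNULL,
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip() == "True"


tty_in_child = child_stdin_is_tty()
```

Any test gated like this is silently skipped in such a child process, which is how a hang can go unnoticed for years.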

Fix a segfault, discover an extra refleak

I don’t have much to do with this one personally but I thought I’d mention it, too. Pablo fixed a 3-year-old segfault this week and after merging it, I discovered that sometimes test_exceptions would now leak 2 references. After some initial investigation, I was done for the day but fortunately Irit took over and found a much smaller reproducer.

We’re still looking into the root cause here. Non-deterministic errors are often the hardest to find. We suspect this to be one of the cases where an unrelated change exposes a refleak that was always there. They’re rare but do happen.

Plans for next week

I only barely touched on the plans I originally had for this week! Things are moving in the right direction though: fixing BPO-44887, for instance, is about running tests with -R: on macOS after all. I’ll have to finish that next week. The ultimate goal is to enable running -R: CI jobs on all PRs, but only for tests relevant to files touched by the PR.

I also want to fuzz Jack’s GH-27434 which looks like a promising fix for an edge case in unittest visual diffing performance.

Oh, and by the way, you know that I’m working remotely from Poland, right? So next week I’ll be working remotely remotely, coding from the Polish seaside. Gotta get that vitamin D while I can. Winter is coming.

Detailed Log

Monday

Issues:

PRs:

- closed pull request GH-21470

- closed pull request GH-27674

- closed pull request GH-27675

- closed pull request GH-27650

- closed pull request GH-27681

- closed pull request GH-27682

- closed pull request GH-27658

- closed pull request GH-18034

- closed pull request GH-27684

- closed pull request GH-27687

- closed pull request GH-18272

Tuesday

Issues:

- closed issue BPO-39498

- closed issue BPO-44872

- closed issue BPO-14853

- closed issue BPO-33349

- closed issue BPO-44854

PRs:

- closed pull request GH-27696

- closed pull request GH-27699

- closed pull request GH-27699

- closed pull request GH-27691

- closed pull request GH-27692

- closed pull request GH-27690

- closed pull request GH-25026

- closed pull request GH-27626

- closed pull request GH-27704

- closed pull request GH-27703

- closed pull request GH-27705

- closed pull request GH-27707

- closed pull request GH-27698

- closed pull request GH-27638

- closed pull request GH-27712

- closed pull request GH-27713

- closed pull request GH-27714

- reviewed pull request GH-27434

- reviewed pull request GH-27709

- reviewed pull request GH-27678

Wednesday

Coding day. Mostly hunting test_builtin hangs on macOS.

Issues:

PRs:

- authored pull request GH-27721

- closed pull request GH-27717

- closed pull request GH-27730

- closed pull request GH-12049

- closed pull request GH-27711

- closed pull request GH-27724

- closed pull request GH-27723

- closed pull request GH-4167

Thursday

Coding day. GitHub PR importer.

Issues:

- closed issue BPO-26228

PRs:

Friday

Issues:

- closed issue BPO-30077

- closed issue BPO-33930

- closed issue BPO-44891

- closed issue BPO-44873

- closed issue BPO-36700

PRs:

- closed pull request GH-27746

- closed pull request GH-27747

- closed pull request GH-27749

- closed pull request GH-27753

- closed pull request GH-27736

- closed pull request GH-27745

- closed pull request GH-27754

- closed pull request GH-27700

- closed pull request GH-27756

- closed pull request GH-27757

- closed pull request GH-27758

- closed pull request GH-27759

- closed pull request GH-24449

- closed pull request GH-27660

- reviewed pull request GH-27434